Simon C. Dominy1, Saranchimeg Purevgerel2 & Kim H. Esbensen3

Quality and sampling error quantification for gold mineral resource estimation

Sampling is a vital component of all stages of the mine value chain. It includes the sampling of in situ material and broken rock for geological, metallurgical and geoenvironmental purposes. Sampling errors are defined in the context of the Theory of Sampling (TOS), where incorrect actions may lead to uncertainty and create a significant overall sampling + measurement error.1–3 The TOS breaks this error down into a series of contributions along the full value chain (the planning to assay-measurement process). Errors are additive throughout this pathway, unavoidably exacerbating risk.2,4–6 After collection, sampling errors also occur in all subsequent downstream processes, contributing to uncertainty in test work and in any decisions based on it. Across the full mine value chain, the sum of these errors generates both financial and intangible losses. In essence, poor-quality, non-representative sampling increases project risk and can often lead to incorrect project valuation. There is hardly any application field where this is more critical than gold mineral resource estimation, because of the very low grades and the extremely irregular mineralisation heterogeneity encountered (Figure 1).

Sampling—the first critical success factor in the mine value chain

The data produced must be fit-for-purpose if they are to support mineral resources/ore reserves reported in accordance with the 2017 PERC Standard (Reference 7) or other international reporting codes. Quality assurance/quality control (QA/QC) is critical to maintaining data integrity through documented procedures, sample security, and monitoring of precision, accuracy and contamination. Samples and their associated assays are key inputs into important decisions throughout the mine value chain.

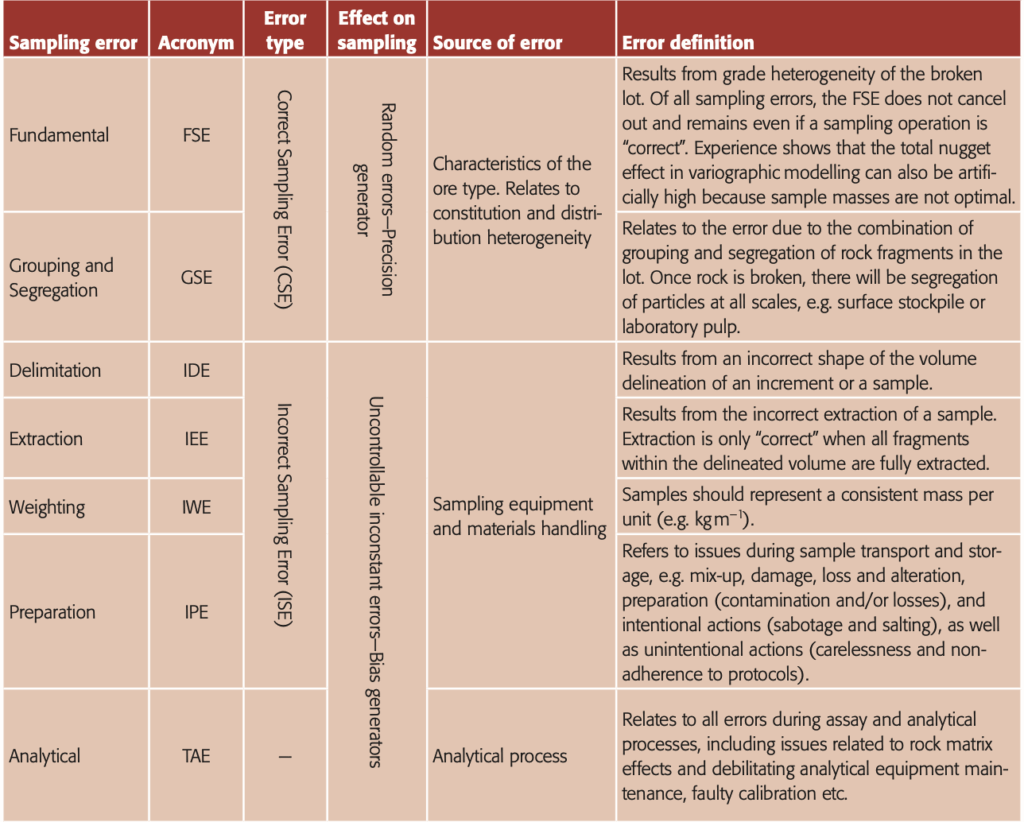

The TOS was first developed in the 1950s by Dr Pierre Gy to deal with sampling challenges in the mining industry, though it has far wider applications today.1–3 The TOS provides critical guidelines for reducing sampling errors, Table 1.

Quality assurance and quality control

Quality assurance and quality control are the key components of a quality management system.8–10 Quality assurance comprises all the actions necessary to provide adequate confidence that a process (e.g. sampling, test work and assaying) will satisfy the pertinent quality requirements. While QA aims to prevent problems, QC aims to detect them in time. Quality control procedures monitor both the precision and accuracy of samples and data, as well as possible sample contamination during preparation and assaying. Throughout any mineral resource sampling programme, QA/QC is a key activity for ensuring that samples are fit-for-purpose.

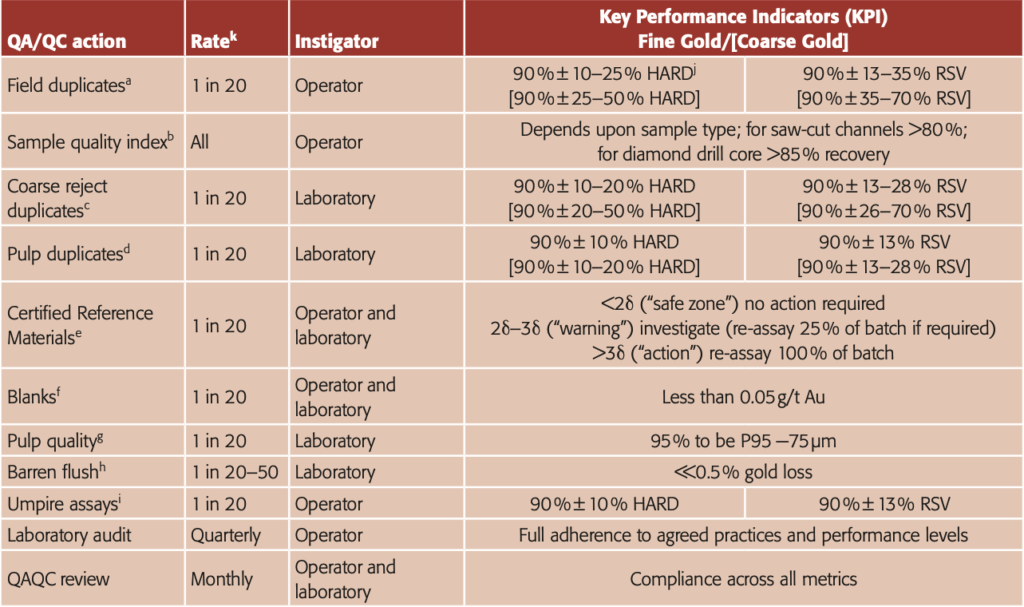

Protocols should be set up to cover field collection, laboratory preparation and analysis. During grade control, QA/QC should include field duplicates and certified reference material (CRM) submission, e.g. a minimum of three CRMs across a range of grades, together with blanks. Laboratory QA/QC shall include internal CRMs, pulp duplicates, umpire sample submission, pulp screen tests and contamination tests. In particular, field duplicate samples provide a measure of the variability of the entire sampling and analysis process. Best-practice QA/QC is a very comprehensive framework, Table 2.
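The insertion rules summarised in the notes to Table 2 (three CRMs and one blank per batch of roughly 20–30 samples, one blank after each expected high-grade or visible-gold sample, and two after samples with substantial visible gold) can be turned into a submission sequence. The sketch below is a minimal illustration under stated assumptions: the `FieldSample` record, the CRM labels, the default batch size of 25 and placing the batch CRMs and blank at the end of each batch are all hypothetical choices, not part of the authors' protocol; field duplicates are left out for brevity.

```python
from dataclasses import dataclass

# Hypothetical labels for the three CRMs (cut-off, ROM and high grade).
CRMS = ("CRM-cutoff", "CRM-ROM", "CRM-high")


@dataclass
class FieldSample:
    sample_id: str
    # Hypothetical coding: 0 = no visible gold expected,
    # 1 = expected high grade or visible gold, 2 = substantial visible gold.
    vg_level: int = 0


def build_submission(samples, batch_size=25):
    """Return a submission sequence with CRMs and blanks inserted.

    Assumption: the three CRMs and the per-batch blank go at the end of
    each batch; the source does not specify their position.
    """
    sequence = []
    for start in range(0, len(samples), batch_size):
        for sample in samples[start:start + batch_size]:
            sequence.append(sample.sample_id)
            # One blank after high-grade/visible-gold samples,
            # two after samples with substantial visible gold.
            sequence.extend(["BLANK"] * min(max(sample.vg_level, 0), 2))
        sequence.extend(CRMS)
        sequence.append("BLANK")
    return sequence


batch = [FieldSample("S001"), FieldSample("S002", vg_level=1), FieldSample("S003", vg_level=2)]
print(build_submission(batch))
```

For the three hypothetical samples this prints `['S001', 'S002', 'BLANK', 'S003', 'BLANK', 'BLANK', 'CRM-cutoff', 'CRM-ROM', 'CRM-high', 'BLANK']`. Keeping the rules in one function makes the insertion scheme auditable alongside the written protocol.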

Documentation of sample collection and laboratory activities is an important part of QA/QC, as is appropriate staff training and monitoring. It is the opinion of the present authors that quality samples only follow from well-trained and experienced personnel. Companies should ensure that all staff involved in sampling activities are appropriately trained in sampling and, during their first few months, receive adequate mentoring (sampling QA). This is in addition to other standard operational and safety training. Proper training shall be facilitated by well-written and illustrated documentation; see examples in Reference 3.

a Applies to any sample type collected.

b Applied to linear and drill samples; KPIs are based on sample type and expected mass.

c Laboratory crusher or reverse circulation (RC) rig rejects.

d Dependent upon the nature of the ore and the assay method. For samples assayed by screen fire assay (SFA), high precision would be expected for the undersize fraction.

e Recommendation to have a minimum of three CRMs, at cut-off, run-of-mine (ROM) and high grades. For any batch of (say) 20–30 samples, the three key CRMs should be added. Note that because CRMs must be homogeneous, they do not contain coarse “nuggety” gold, but they can be matrix-matched, e.g. by being quartz-dominated or sulphide-bearing. The laboratory will also insert its own CRMs. CRMs submitted with an SFA stream will simply be fire assayed. Action is required if the 3 standard deviation (3SD) limit is breached, usually re-assay of the entire batch if possible.

f Blanks provide a measure of contamination. They should be inserted after expected high-grade and/or visible gold-bearing samples. If substantial visible gold is present, two separate blanks should be placed after the sample. One blank should be added together with the three CRMs per batch. The laboratory will also insert blanks into the sample stream.

g The test involves screening the pulp, or using an autosizer, to confirm 95 % passing. All samples should pass, or the entire batch should be reground.

h A barren flush may be inserted after every sample for coarse gold ores. The barren flush should itself be assayed at a rate of 1 in 50 for fine gold ores, increasing to 1 in 20 for coarse gold ores. Careful management of coarse gold ores is required. It is suggested that laboratories include a “wash” after visibly high-grade (e.g. visible gold-bearing) samples. However, if the ore contains notable coarse gold, cleaning is best carried out after every sample, given that even low-grade samples can contain coarse gold particles.

i Monthly submission of samples (typically pulps), including standards and duplicates, is sufficient to provide a check of primary laboratory results. This is especially important where an on-site laboratory is used, as it provides independent confirmation of the results. Where SFA, LeachWELL (LW) or PAL (pulverise-and-leach) is used, there may be no pulp residues to submit; in this case, coarse rejects can be used. Umpire samples (e.g. pulps or coarse duplicates) should be supplied to the mine and submitted by mine staff to the umpire laboratory. In some cases, the laboratory (mine or off-site) may submit umpire samples as part of its internal QA/QC.

j HARD (half absolute relative difference) is half the absolute difference of the pair divided by the pair mean; HARD values are given for fine versus coarse gold. HARD can be expressed as RSV, where HARD = (√2/2) · RSV; e.g. ±10 % HARD corresponds to ±14 % RSV.

k It is important to ensure that enough QA data is collected, particularly during a small sampling programme. The rate of insertion of CRMs, blanks etc. may need to be increased beyond the nominal 1 in 20 to achieve a minimum of 10 results.
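Three of the numerical rules above (the CRM control limit in note e, the HARD/RSV relation in note j and the minimum number of QC results in note k) can be sketched in a few lines of Python. The function names are hypothetical; the insertion-interval rule is a direct reading of note k (shorten the nominal 1 in 20 interval until at least 10 results are obtained), and all grades are assumed to be positive.

```python
import math


def hard(a, b):
    """Half absolute relative difference: half the absolute difference divided by the pair mean."""
    mean = (a + b) / 2.0
    return (abs(a - b) / 2.0) / mean


def pair_rsv(a, b):
    """RSV of one duplicate pair: pair standard deviation (n - 1 = 1) divided by the pair mean."""
    mean = (a + b) / 2.0
    sd = abs(a - b) / math.sqrt(2.0)
    return sd / mean


def crm_breached(measured, certified, sd, k=3.0):
    """True if a CRM result falls outside the certified value +/- k standard deviations (note e)."""
    return abs(measured - certified) > k * sd


def insertion_interval(n_samples, min_results=10, nominal=20):
    """Longest '1 in N' interval, capped at the nominal rate, giving at least min_results QC results.

    With fewer than min_results samples even 1 in 1 cannot reach the target,
    so extra QC samples would have to be added.
    """
    return min(nominal, max(1, n_samples // min_results))


a, b = 0.9, 1.1  # hypothetical duplicate pair (g/t)
print(f"HARD {hard(a, b):.3f}, RSV {pair_rsv(a, b):.3f}")  # HARD 0.100, RSV 0.141
print(crm_breached(2.35, 2.00, 0.10))                       # True
print(insertion_interval(120))                              # 12, i.e. 10 results from 120 samples
```

The ratio `pair_rsv / hard` is always √2, which is the HARD/RSV conversion stated in note j.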

Training and mentoring should be linked to continuous quality improvement programmes, in which protocols are internally and externally audited at least annually. Ongoing supervision and periodic re-training are strongly recommended, and should always be partly based on practice at the rock face or in the core shed, not only in the classroom.

Quantifying errors along the full sampling value chain

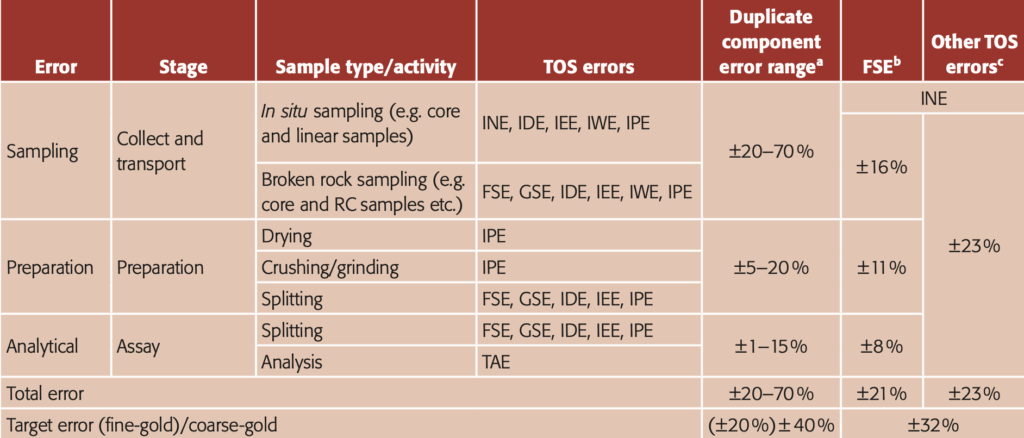

The results of duplicate sampling programmes document the magnitude of errors across the full sampling value chain, Table 3. These generally show that a large component of the total error is introduced during sample collection, especially during primary sampling. As a result, excessive efforts to reduce errors during preparation and analysis will not necessarily result in a substantive reduction in uncertainty. In contrast, collecting larger, high-quality field samples (for example, using a higher number of increments in composite samples) will result in significant error reduction, provided that other protocols are optimised appropriately.
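Because independent stage errors combine as variances, not as RSVs, the total is dominated by the largest stage. The sketch below uses hypothetical stage RSVs (30 % field, 10 % preparation, 5 % analysis), chosen only to illustrate the point and not taken from Table 3.

```python
import math


def total_rsv(stage_rsvs):
    """Combine independent stage RSVs (%) by summing their variances."""
    return math.sqrt(sum(r ** 2 for r in stage_rsvs.values()))


# Hypothetical stage RSVs in %, for illustration only.
stages = {"field": 30.0, "preparation": 10.0, "analysis": 5.0}

print(f"baseline:        {total_rsv(stages):.1f} %")                       # 32.0 %
print(f"analysis halved: {total_rsv({**stages, 'analysis': 2.5}):.1f} %")  # 31.7 %
print(f"field halved:    {total_rsv({**stages, 'field': 15.0}):.1f} %")    # 18.7 %
```

Halving the analytical error barely moves the total, whereas halving the field error cuts it by more than 40 %.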

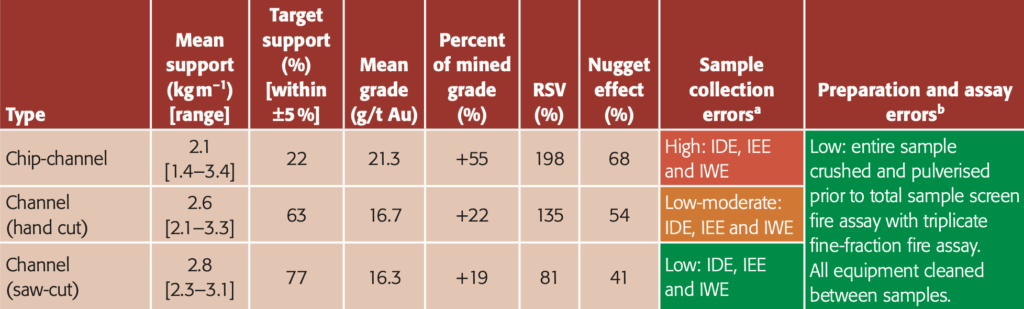

Test work from a gold vein deposit exemplifies the impact of sampling error through a comparison of chip and channel samples (Table 4).13 Seventy-five sample triplicates (chip, hand-cut channel and saw-cut channel) were collected around a 40 m × 20 m stope block (Figure 2). The mineralisation was known to be moderately variable, containing visible gold up to 1.5 mm in size. The test block was sampled from faces located every 1.5 m along its upper and lower drives and two raises. After cleaning, a reference line was drawn across each face centre and the different types of samples were collected systematically from the bottom up: chip sample, hand-cut channel and saw-cut channel. The sample delimitation dimensions were estimated and designed to achieve a theoretical sample support of 3 kg m–1. All samples were subsequently prepared and assayed in identical fashion, via total sample preparation and screen fire assay. The fundamental sampling error (FSE) for this highly optimised protocol was effectively zero. A QA/QC programme was applied, with all CRMs and blanks within expectation.
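A sample support of 3 kg m–1 translates into a channel cross-section through the rock density. A minimal worked calculation, assuming a hypothetical in situ density of 2.7 t m–3 and a channel width of 10 cm (neither value is given in the text):

```python
def channel_depth_mm(support_kg_per_m, width_m, density_t_per_m3):
    """Channel depth (mm) needed to deliver a given mass per metre of channel.

    mass per metre = width x depth x density, so depth = support / (width x density).
    """
    density_kg_per_m3 = density_t_per_m3 * 1000.0
    area_m2 = support_kg_per_m / density_kg_per_m3  # required cross-sectional area
    return area_m2 / width_m * 1000.0


# 3 kg/m support from the text; 10 cm width and 2.7 t/m3 density are hypothetical.
print(f"{channel_depth_mm(3.0, 0.10, 2.7):.1f} mm")  # 11.1 mm
```

Under these assumptions each channel would need to be cut roughly 11 mm deep; a denser or narrower channel changes the answer proportionally.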

These results show a marked reduction in RSV and nugget effect from the chip to the saw-cut channel samples. The rigorous laboratory protocol and QA/QC indicate that errors within the laboratory were minimal. The remaining variability therefore relates to the in situ nugget effect and to sample collection. For the channel samples, the dominant error relates to the in situ nugget effect, given that their sampling error was minimal. The dominant difference between the chip and saw-cut channel samples relates to sampling error. These results corroborate many previous findings, showing that saw-cut channel samples provide the best sample quality. Most importantly, this experiment substantiates the critical role of empirical quantification of the total sampling/preparation/analysis error.

Stage-wise error evaluation

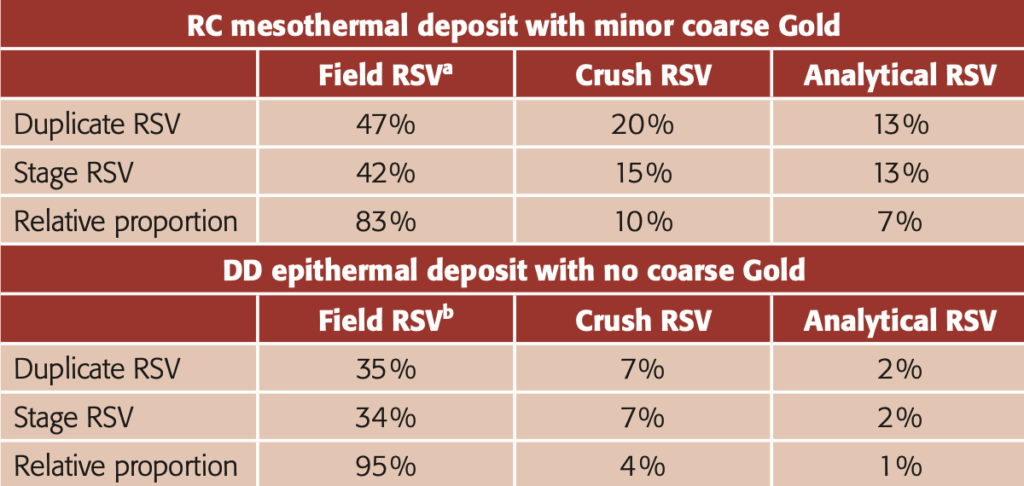

More detailed error evaluations can be undertaken for each key stage along the sampling value chain. Table 5 shows the results of such an analysis for two contrasting gold ore types (termed mesothermal and epithermal). In both cases, the highest stage error again turned out to be the field RSV, at 42 % and 34 %, respectively.

a Potential component error range as determined from duplicate sample (pair) analysis.11

b Maximum recommended FSE distribution across the sampling stages.12

c Maximum recommended other TOS error proportions across the sampling stages.12

RC: Reverse Circulation.

For the epithermal system (no coarse gold), all stage errors were found to be reasonable and did not require further action (Figure 3).

For the mesothermal system (coarse, i.e. “nugget”, gold), both the field and analytical RSVs were deemed high. To improve on this, a larger split was taken at the rig (4 kg instead of 2 kg) to reduce the field RSV, and the entire 4 kg was assayed by a more precise analytical method (LeachWELL). Based on initial duplicates from the revised protocol, the field RSV fell to 36 % and the analytical RSV to 4 %, now acceptable for coarse gold mineralisation.
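For a sample much smaller than the lot, Gy's fundamental sampling error variance is approximately inversely proportional to sample mass. A rough check of the mesothermal change can be made under a simplifying assumption that is not made in the source: that the field variance consists of one part scaling with 1/mass and one part that does not. The function names are hypothetical.

```python
import math


def rsv_after_mass_change(rsv_pct, mass_old_kg, mass_new_kg):
    """Predicted RSV (%) if the whole variance scaled with 1/mass (FSE-like behaviour)."""
    return rsv_pct * math.sqrt(mass_old_kg / mass_new_kg)


def split_two_components(rsv_old_pct, rsv_new_pct, mass_ratio):
    """Split variance into a mass-independent part and a part scaling with 1/mass.

    Solves  rsv_old^2 = fixed + scaled
            rsv_new^2 = fixed + scaled / mass_ratio
    and returns (fixed, scaled) as RSV-equivalents in %.
    """
    v_old, v_new = rsv_old_pct ** 2, rsv_new_pct ** 2
    scaled = (v_old - v_new) / (1.0 - 1.0 / mass_ratio)
    fixed = v_old - scaled
    return math.sqrt(max(fixed, 0.0)), math.sqrt(max(scaled, 0.0))


print(f"{rsv_after_mass_change(42.0, 2.0, 4.0):.1f} %")  # 29.7 %
fixed, scaled = split_two_components(42.0, 36.0, 2.0)
print(f"mass-independent {fixed:.1f} %, mass-dependent {scaled:.1f} %")  # 28.8 %, 30.6 %
```

If all of the 42 % field RSV scaled with mass, doubling the split would bring it to about 30 %. Under this two-component model, the reported 36 % would imply that roughly half of the field variance does not depend on split mass; this is an illustrative reading only.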

There is a need for, and a clear advantage in, moving towards full quantification of errors for objective QC assessment. A first step is the application of the RSV, the sampling + analysis variability characteristic defined in DS3077.3,14 Individual relative errors across the complete sampling, preparation and analysis stages can be resolved from simple duplicate sample pairs, as evidenced by Table 5.
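The idea of resolving stage errors from duplicate pairs can be sketched as a nested-duplicate calculation: each duplicate level includes the variance of every stage below it, so stage variances follow by subtraction. This is a minimal sketch under assumptions: the three duplicate levels (field, coarse reject, pulp), the root-mean-square pooling of pair RSVs and the function names are illustrative choices, and a real programme would use many more pairs than the single hypothetical pair per level shown here.

```python
import math


def pooled_rsv(pairs):
    """Pooled RSV (fraction) from duplicate pairs, as the root mean square of pair RSVs.

    Each pair's variance is (a - b)^2 / 2 (sample variance with n - 1 = 1),
    scaled by the squared pair mean. Grades must be positive.
    """
    if not pairs:
        raise ValueError("need at least one duplicate pair")
    total = 0.0
    for a, b in pairs:
        mean = (a + b) / 2.0
        if mean <= 0:
            raise ValueError("pair mean must be positive")
        total += ((a - b) ** 2 / 2.0) / mean ** 2
    return math.sqrt(total / len(pairs))


def stage_rsvs(field_pairs, coarse_pairs, pulp_pairs):
    """Resolve stage RSVs from nested duplicates by subtracting variances.

    field duplicates  -> field + preparation + analysis
    coarse duplicates -> preparation + analysis
    pulp duplicates   -> analysis
    Negative differences (possible with few pairs) are clamped to zero.
    """
    v_total = pooled_rsv(field_pairs) ** 2
    v_prep_and_analysis = pooled_rsv(coarse_pairs) ** 2
    v_analysis = pooled_rsv(pulp_pairs) ** 2
    return {
        "field": math.sqrt(max(v_total - v_prep_and_analysis, 0.0)),
        "preparation": math.sqrt(max(v_prep_and_analysis - v_analysis, 0.0)),
        "analysis": math.sqrt(v_analysis),
    }


# Hypothetical duplicate results (g/t), one pair per level for brevity.
result = stage_rsvs([(0.8, 1.2)], [(0.9, 1.1)], [(0.95, 1.05)])
print({stage: round(100 * rsv, 1) for stage, rsv in result.items()})
# {'field': 24.5, 'preparation': 12.2, 'analysis': 7.1}
```

Because the stage variances are obtained by subtraction, their squares add back up to the field-duplicate variance, mirroring the additive error model of the TOS.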

Gold – always special

Gold resource estimation raises special issues compared with many other materials and commodities. Deliberately stringent practical measures are therefore recommended to reduce the risk of sample tampering. These could include maintaining increased security between the sample site (e.g. mine face or drill rig) and sample transport, carefully recording who has access to samples between collection and shipping, and keeping a copy of that record.
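The access record described above can be kept as a simple chain-of-custody log and checked for breaks. A minimal sketch, assuming a hypothetical record layout (`Handover`) in which each entry names who handed the sample over, who received it, and when and where:

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Handover:
    """One transfer of a sample between people (hypothetical record layout)."""
    time: datetime
    from_person: str
    to_person: str
    location: str


def custody_breaks(collected_by, handovers):
    """Return indices of handovers that break the chain of custody.

    A break is flagged when the person handing over is not the current holder,
    or when an entry is dated earlier than the previous one.
    """
    breaks = []
    holder, last_time = collected_by, None
    for i, h in enumerate(handovers):
        if h.from_person != holder or (last_time is not None and h.time < last_time):
            breaks.append(i)
        holder, last_time = h.to_person, h.time
    return breaks


# Hypothetical log for one sample.
log = [
    Handover(datetime(2024, 3, 1, 9, 0), "sampler A", "core shed supervisor B", "drill rig"),
    Handover(datetime(2024, 3, 1, 16, 0), "courier C", "laboratory D", "dispatch"),
]
print(custody_breaks("sampler A", log))  # [1]: courier C never received the sample
```

A flagged index points to the entry where access to the sample cannot be accounted for, which is exactly the gap such a record is meant to expose.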

a Rig duplicate.

b Core half duplicate.

Conclusions

Geologists and analytical chemists must acknowledge the systematic rigour of the TOS framework and should readily appreciate the benefits of properly managing all associated errors.

Empirical error estimation for all stages of the complete “from-lot-to-aliquot” pathway has been demonstrated above, and the value of the critical information gained is clear. Where samples are analysed to support any resource estimate, a QA/QC programme must be introduced to ensure continuous quality information on both sampling and assaying. Written protocols and procedures, staff training, periodic auditing of protocols and people, and re-training are all required. DS3077 (Reference 14) provides a framework for producing transparent protocols for the specific sampling pathway. There are many QA/QC frameworks that can be applied; more on this in later Sampling Columns.

References

[1] P.M. Gy, Sampling of Particulate Materials: Theory and Practice. Elsevier (1982).

[2] F.F. Pitard, Theory of Sampling and Sampling Practice. CRC Press (2019). https://doi.org/10.1201/9781351105934

[3] K.H. Esbensen, Introduction to the Theory and Practice of Sampling. IM Publications Open (2020). https://doi.org/10.1255/978-1-906715-29-8

[4] R.C.A. Minnitt, “Sampling: The impact on costs and decision making”, J. South Afr. Inst. Min. Metall. 107, 451–462 (2007).

[5] S.C. Dominy, “Importance of good sampling practice throughout the gold mine value chain”, Min. Tech. 125, 129–141 (2016). https://doi.org/10.1179/1743286315Y.0000000028

[6] J.-M. Rendu, Risk Management in Evaluating Mineral Deposits. Society for Mining, Metallurgy and Exploration (2017).

[7] PERC, Pan-European Standard for Reporting of Exploration Results, Mineral Resources and Reserves. The Pan-European Reserves and Resources Reporting Committee (PERC) (2017).

[8] M.A. Vallée, “Sampling quality control”, Explor. Min. Geol. 7, 107–116 (1998).

[9] M.Z. Abzalov, “Quality control of assay data: a review of procedures for measuring and monitoring precision and accuracy”, Explor. Min. Geol. 17(3–4), 131–144 (2008). https://doi.org/10.2113/gsemg.17.3-4.131

[10] A. Simon and G. Gosson, “Considerations on quality assurance/quality control and sample security”, in Proceedings of the Sampling Conference, Perth, Australia, 27–29 May 2008. Australasian Institute of Mining and Metallurgy, pp. 135–140 (2008).

[11] C.R. Stanley and B.W. Smee, “Strategies for reducing sampling errors in exploration and resource definition drilling programmes for gold deposits”, Geochem. Explor. Environ. Anal. 7, 329–340 (2007). https://doi.org/10.1144/1467-7873/07-128

[12] F.F. Pitard, “Guidelines for acceptable allotted sampling uncertainty”, in Proceedings of the World Conference on Sampling and Blending. Gecamin, Santiago, pp. 89–98 (2013).

[13] S.C. Dominy, H.J. Glass, L. O’Connor, C.K. Lam, S. Purevgerel and R.C.A. Minnitt, “Integrating the Theory of Sampling into underground mine grade control strategies”, Minerals 8(6), 232 (2018). https://doi.org/10.3390/min8060232

[14] DS3077. Representative Sampling – Horizontal Standard. Danish Standards Foundation (2013). www.ds.dk