Kim H. Esbensen

Danish geology icon Arne Noe-Nygaard picks up on an 800-year-old sampling and invents the Replication Experiment: PAT in disguise

This column showcases the extraordinarily versatile Replication Experiment (RE). Although the RE has been presented and illustrated before within the professional sampling community, there are still many cases offering inspiring, didactic applications that allow a broader view of the types of “analysis” associated with sampling. So-called “economic geological processes” (mineralisation, ore exploration and mining, minerals processing) are of key importance within the traditional fields of application of the Theory of Sampling (TOS), but here the column author and editor drags the reader into a realm very rarely visited by the sampling community: academic geology. The present case could just as well have been titled “Danish medieval churches meet inspiring geologic icon inventing the RE independently of the TOS”.

Arne Noe-Nygaard, Danish geologist (1908‒1991)

Noe-Nygaard was a Nestor in Danish and Scandinavian geology through a long and very productive academic career. He was a professor for 40 years, was widely involved in popularising geology and was intimately involved in the founding of The Geological Survey of Greenland (GGU, now GEUS). His biography in Wikipedia is unfortunately only in Danish, but visit it anyway: a lot of geology is communicated in pictures, images and maps. Much of his extensive oeuvre is written in English and German. It spans from the Precambrian in Greenland and Denmark to the present (the Quaternary), with a focus on volcanology in Iceland, Greenland and the Faroe Islands, as well as many other topics, one of which is presented in this column.

A most unusual sampling setting

The present exposé is based on what turned out to be Noe-Nygaard’s last book publication, titled Larvikiter i Kvaderstenskirker (DGU Publ. 1991), ISBN 87-88640-74-4 [Larvikites in hewn stone churches] [1].

Barely of book size (only 32 pages), it tells a fascinating geological detective story about the provenance of wall rocks in Danish medieval stone churches in northern and western Jutland. As the name implies, this type of church was built in medieval times of square hewn rocks gathered from the local landscape. But their ultimate origin is much older, and this is the red thread of this column.

The Romanesque hewn rock churches in Jutland were constructed in the period 1100‒1200. There are still some 700 of them in a reasonably well-preserved state. In fact, this type of church is rather unique to the northern and western parts of Jutland and is hardly found anywhere else in the world [1,2]. It is the professional historical view that the source of the rocks used for the original church building is local, i.e. they represent the surrounding landscape, from where they were transported over as short a distance as possible before being hewn, probably at the church site. It is easy to compensate for later alterations and additions, which often show a distinctly later architectural style, e.g. as seen in Figures 2 and 3 (enlargement of windows, lead roofing and addition of a bell tower). With this compensation in place, the geologist Noe-Nygaard shared the belief that most of the original church walls in northern and western Jutland represent a well-preserved sample of the local rocks found on the surface at the time of building.

But why, and how did the medieval landscape come to be strewn with an abundance of boulders and rocks of a size that would suffice well for production of hewn rocks? This is where an underlying relationship between geology and religion has its origin. It is a fascinating story that involves “erratics”…

Erratics—composition, origin, glacial transportation

On the use of the word in everyday language, Merriam-Webster has the following to say: “Erratic can refer to literal ‘wandering’. A missile that loses its guidance system may follow an erratic path, and a river with lots of twists and bends is said to have an erratic course. Erratic can also mean ‘inconsistent’ or ‘irregular’. So, a stock market that often changes direction is said to be acting erratically; an erratic heartbeat can be cause for concern; and if your car idles erratically, it may mean that something’s wrong with the spark-plug wiring”.

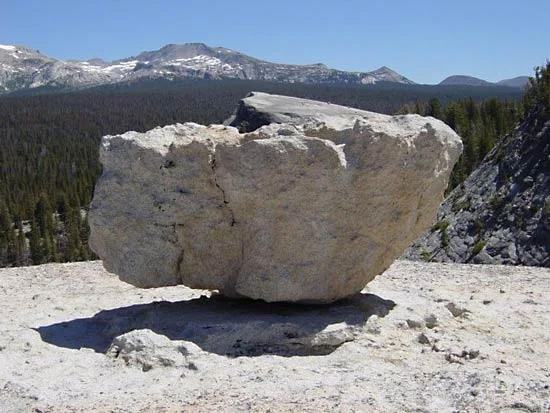

In geology, however, the term is distinctly specific. Here erratic is used in one particular sense only, concerning composition, provenance, and direction and distance travelled. Glacial erratics are stones and rocks that were transported by a glacier and left behind after the glacier melted and retreated. Glacial erratics are thus formed by erosion (“plucking”) resulting from the flow of ice over the local bedrock. Such erratics range in size from pebbles to large boulders and may have been carried for hundreds of kilometres (800 km is an often-quoted maximum). Scientists have, among other things, used erratics to help determine ancient glacier movements, i.e. directions, distances and other local features. Particularly large erratics end up as marked landscape elements (Figure 4), sometimes associated with much later local historical lore.

Of specific interest to the uninitiated reader, and directly related to the story in this column, is the fact that erratics differ in composition, and hence in appearance, from the local bedrock upon which they are found; this difference is, of course, clearest to the trained geological eye. Erratics may be embedded in the fine-grained, ground-up glacial deposits (called till) or, more often, occur as conspicuously independent “special” landscape elements on the bare ground surface.

Those transported over long distances generally consist of rock resistant to the shattering and grinding effects of glacial transport. Erratics composed of unusual and distinctive rock types can, by diligent and competent geologists, sometimes be traced to their source of origin and thereby serve as indicators of the direction of glacial movements. Studies making use of such indicator erratics have provided information on the flow paths of the major ice sheets in the ice age(s) of our planet (and indeed also on occasion the location of important mineral deposits). Erratics played an important part in the initial recognition of the most recent ice age(s) and their extent. Originally thought to be transported by gigantic floods or by ice rafting, erratics were first correctly explained in terms of glacial transport by the Swiss-American naturalist and geologist J.L.R. Agassiz in 1840.

For more information, see the comprehensive entry on glacial erratics in Wikipedia: https://en.wikipedia.org/wiki/Glacial_erratic. In this wide-ranging entry on glacier-borne erratics, a wealth of examples are described, from Australia, Canada, Estonia, Finland, Germany, Republic of Ireland, Latvia, Lithuania, Poland, the United Kingdom and the USA. Curiously, however, there is a distinct lacuna: Norway and Denmark are completely missing, which is a major affront to geologists from these two countries, something to be rectified with a friendly vengeance below!

Zooming in…

The reader is now in possession of the necessary subject-matter background for the denouement of this column. Here are the telling detective clues:

1) Larvikite is a distinct igneous rock formed by solidifying magma, not as a lava, but as a deep-seated intrusive magmatic body in the Earth’s crust. Figure 8 below also shows the source area of known larvikite occurrences in Norway. Igneous rock types are named after their type locality, i.e. the location where the rock was first described scientifically.

A few facts of interest:

1) Larvik (https://en.wikipedia.org/wiki/Larvik) is the birth town of the world-renowned Norwegian explorer and historian Thor Heyerdahl of Kon-Tiki expedition fame.

2) The author of the present column also resided in Larvik for an extended period of time (1980‒2000), from which grew a fascination with the particular rock type in question here. The city itself is immensely proud of its world renowned resources of dimension stone, in the form of polished façade rocks, a major export asset.

3) For a thorough description of the geology of larvikite, see the comprehensive publication by Heldal et al. (2008), which, although written for professionals, can also be browsed with pleasure by interested parties: https://www.ngu.no/upload/Publikasjoner/Special%20publication/SP11_02_Heldal.pdf

2) There are no occurrences of bedrock of the larvikite type in Denmark: none!

3) But, very many hewn rock churches in the northern and western-most parts of the Jutland peninsula of Denmark contain a definite, identifiable proportion of larvikite rocks in their makeup. There are actually seven recognisable sub-types (varieties) of larvikite involved, which is for the professional geologists to keep track of, but no worries: Noe-Nygaard knew his larvikites!

4) So how come there were decidedly non-native, indeed “erratic”, rock types to be found in the walls of medieval churches in Jutland? This was a major mystery at the time when the science of geology was developing in the 19th century. For example, it was suggested that major floods could have been responsible for such marked dislocations, but after the Agassiz breakthrough (1840) a modern understanding was quickly worked out: in earlier times large(r) parts of the continents in the northern hemisphere were covered (once or several times) by thick sheets of ice (really thick: up to 3 km, as in the present-day inland ice sheet covering Greenland). Erratics were now envisaged as having been transported by the internal flow of ice masses during one (or possibly several recurrent) glacier event(s) during a specific ice age. An important part of this development concerns the evidence and the relics left by scouring ice flows interacting with the bedrock over which they flow (plucking, plucking …). There is an absolutely overpowering force at work at the bottom of thick ice flows.

5) So, it is no longer a mystery that, for example, larvikite erratics can be found in Denmark several hundred km south of their point of origin; this picture is today well known and accepted. But filling out the details of this broad framework still leaves a lot of complex and highly fascinating questions, answers to which have been worked out by later generations of geologists, and this is where the legacy of Arne Noe-Nygaard’s last book comes to the fore. One such question: from which of the three major ice ages that can be recognised in Denmark did this erratic complement of surface-found stones originate? (There are several other intricate details involved here, which find their resolution at the end of Noe-Nygaard’s account, but these can safely be left to the professional connoisseurs of Quaternary glacial geology.) Here we leave such particulars and fast forward to sampling and analysis in this fascinating context.

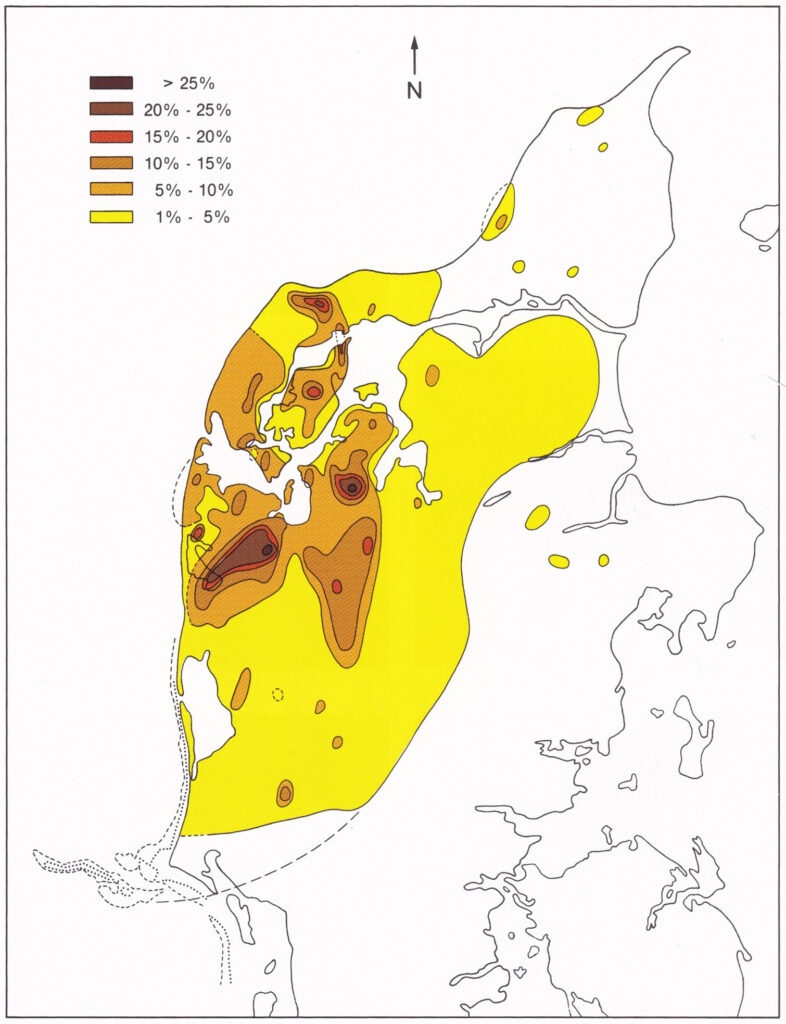

6) Noe-Nygaard’s book gives readers a highly personal tour de Jylland in the form of numerical accounts of the assemblages of hewn rocks found in the walls of the gamut of Romanesque churches, broadly constructed in the period 1100‒1200. The final result of Noe-Nygaard’s investigation is reproduced below as Figure 7, to be further commented upon.

In medias res: sampling and analysis

So, what kind of sampling was used in this story? And what kind of analysis?

One could perhaps imagine that church wall rocks were sampled in the traditional field geological sense, with “field samples” brought to the laboratory for petrological, mineralogical and geochemical analysis with a view to identifying the different types of larvikite rocks and thus their proportions of the complete hewn rock church assembly. But no, the story is more interesting, and far more personal in a unique sense. In today’s sampling and analysis terms as used in science, technology and industry, Noe-Nygaard unknowingly made use of what is now known as a “PAT approach”, although the concept of Process Analytical Technology was not established until years after Noe-Nygaard’s first field investigations.

A PAT aside

The key aspect of PAT is to perform sampling and analysis in one and the same operation. Within PAT the focus is nearly always on the many contending analytical modalities competing for attention and each claiming superiority, but there is also an underlying, unfortunately often unrecognised challenge, related to the role of the sampling interface.

The key characteristic of PAT is the deployment of sensor technologies (physical probes, chemical sensors, other sensors) intercepting and interacting with a process stream, so that sampling and analysis are performed simultaneously as one unified process: probes and sensors interact analytically with an often small (sometimes minute) “effective volume” of the flux of matter, which represents the support volume from which analytical signals are acquired. These signals are very often multi-channel spectroscopic signals, which can be transformed into a predicted chemical or physical measurement; see, for example, the fundamental textbook by Katherine Bakeev, Process Analytical Technology [3]. Here chemometrics has made essential contributions by deploying the powerful multivariate calibration approach, e.g. Esbensen & Swarbrick [4].

Methods and equipment for process sampling are front and centre in the realm of the Theory of Sampling (TOS). The TOS supplies a comprehensive, well-proven framework that derives all principles and implementation demands needed to extract representative physical samples from moving lots, i.e. from a conveyor belt or from ducted material streams. PAT aspires to carry this over to the situation in which the task is to extract representative sensor signals instead of physical samples.

For “sensor sampling”, i.e. PAT, there is no similar foundational framework. Instead, a pronounced practical approach is evident in this realm, in which the question of how to achieve representative sensor signals is not so much related to the design and implementation of an appropriate sampling interface between the sensor and the streaming flux of matter. Rather, a survey of the gamut of sensor interfaces presented in industry and in the literature reveals a credo that appears to be: “Get good quality multivariate spectral data, and chemometrics will do the rest”, exclusively relying on multivariate calibration of process sensor signals (multi-channel analytical instruments). There is a tacit misunderstanding that the admittedly powerful chemometric data modelling is able to take on and correct for any kind of sensor signal uncertainty, including “sampling errors”. However, this leaves analytical representativity the victim of imperfect understanding of the nature of data analytical errors (ε) vs sampling errors (TOS errors).

In the current PAT focus, representativity is wholly related to spectral and reference sample measurement uncertainty (MU) and to possible data modelling errors. This unfortunately ignores the geometric specifics of sensor signal acquisition in relation to the full cross-section of the streaming/ducted flux of matter, even though this is the very domain where sampling errors occur in exactly the same fashion as when extracting physical samples. The process sampling interface comes to the fore.
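The point about the sensor's support volume versus the full cross-section can be illustrated with a toy simulation. This is only a sketch under stated assumptions: the segregated concentration profile, the number of cells, the noise level and the probe position are all hypothetical choices made for illustration and do not come from any real process or from the references cited here.

```python
import random
from statistics import mean

def simulate_cross_section(rng, n_cells=100, gradient=2.0, noise=0.5):
    """One time slice of a hypothetical segregated stream.

    The concentration rises linearly across the duct (assumed segregation),
    with random cell-to-cell variation on top.
    """
    return [10.0 + gradient * i / (n_cells - 1) + rng.gauss(0.0, noise)
            for i in range(n_cells)]

def sensor_reading(cross_section, first_cell=0, support_cells=5):
    """A probe that only 'sees' a small, fixed part of the cross-section."""
    return mean(cross_section[first_cell:first_cell + support_cells])

rng = random.Random(0)  # fixed seed so the sketch is reproducible
slices = [simulate_cross_section(rng) for _ in range(200)]

true_means = [mean(s) for s in slices]             # full cross-section
probe_means = [sensor_reading(s) for s in slices]  # small support volume

# Systematic difference between what the probe sees and the whole stream
bias = mean(probe_means) - mean(true_means)
```

In this toy setting the probe signal is systematically lower than the full cross-section average, however precise the signal itself may be. The deviation arises at the sampling interface, before any data modelling takes place.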

And this PAT framework relates to the rock assemblages in medieval Danish churches 800 years old—how?

Unknowingly, Arne Noe-Nygaard devised a quite similar simultaneous sampling and analysis approach, in his case in the form of field sampling and analysis all in one. But interestingly, his field sampling was not the traditional geological sample collection for analysis in the laboratory.

Field rock identification: field sampling and analysis in one!

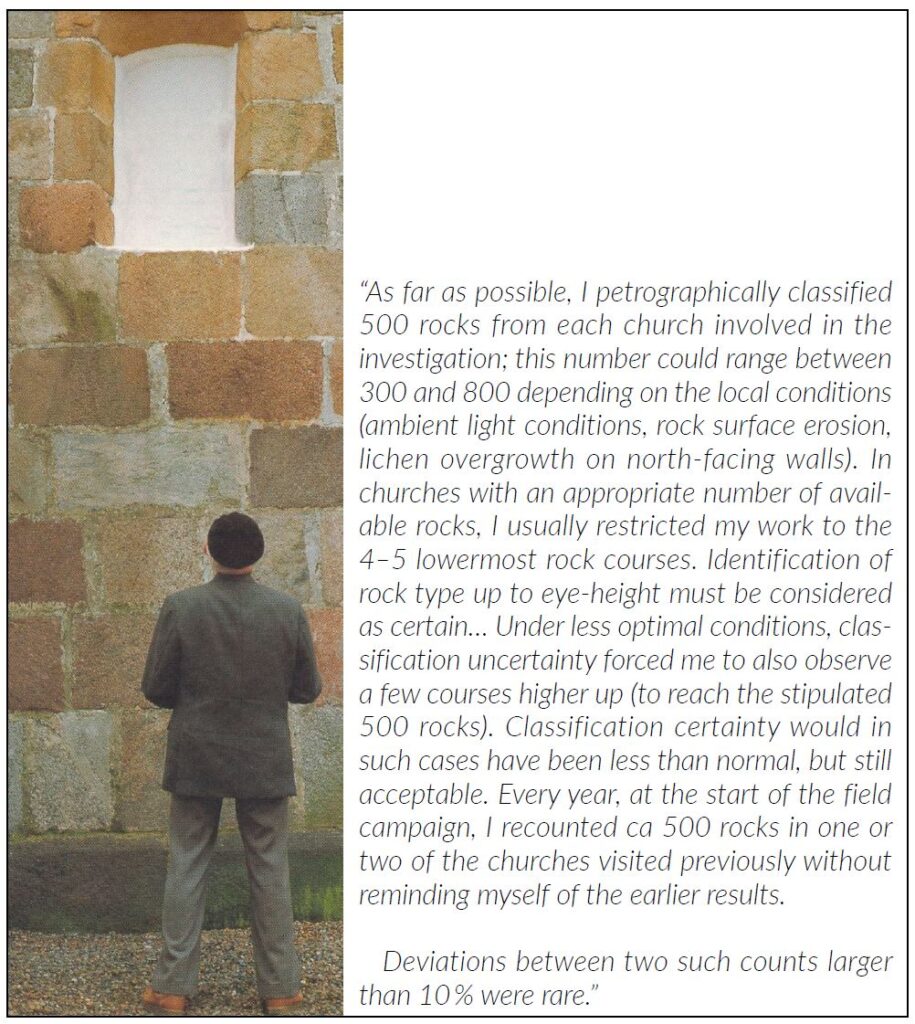

So here is how Noe-Nygaard went about his analysis, i.e. visual rock type identification (aka “rock classification”), based on decades of experience with this kind of rock in Scandinavia. Noe-Nygaard was a very experienced geologist able to recognise all the seven major kinds of syenitic rocks making up the family of larvikites.

And now the story gets historical. The field sampling part (gathering the local surface rocks from the landscapes in Jutland) was undertaken by the original medieval church builders, who, with absolute certainty, were driven by very different motivations than science: the masonry has its origin in the religious wish to build churches in which to worship. It was Arne Noe-Nygaard’s inspired geological brilliance to make explicit this hidden sampling aspect of medieval church building [1,2]. Sampling by religious proxy! Thus, each medieval church takes on the role of a (rather large) sample of local landscape boulders, the size of which amounts to the cumulative wall area of the lowermost 5‒7(8) rock courses. In passing (a treat for TOS experts), one observes that samples of this type are composed of very, very large “particles”, making it imperative to obtain a large enough square footage: the stated minimum is ca 500 rocks (see magazine front cover for a deliberate pointed focus).

Then, with a delay of some 800 years, fast forward to “analysis”: field rock identification, Figure 6.

From this field geological rock identification, the proportion of each larvikite variety (and, therefore, also their cumulative proportion) could easily be calculated as relative % w.r.t. all rocks counted for each church. These results were then plotted on a geographical map of Jutland, Figure 7.
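The arithmetic behind such a per-church tally is simple and can be sketched in a few lines of Python. The variety names and counts below are hypothetical, chosen only for illustration; they are not taken from Noe-Nygaard's book. The only figure borrowed from the text is the stated minimum of ca 500 rocks per church.

```python
from typing import Dict

MIN_ROCKS = 500  # stated minimum number of rocks per church "sample"

def larvikite_percentages(larvikite_counts: Dict[str, int],
                          total_rocks: int) -> Dict[str, float]:
    """Relative % of each larvikite variety w.r.t. all rocks counted,
    plus the cumulative % for all larvikites together."""
    if total_rocks <= 0:
        raise ValueError("total_rocks must be positive")
    n_larvikites = sum(larvikite_counts.values())
    if n_larvikites > total_rocks:
        raise ValueError("larvikite counts exceed the total rock count")
    percentages = {name: 100.0 * n / total_rocks
                   for name, n in larvikite_counts.items()}
    percentages["all larvikites"] = 100.0 * n_larvikites / total_rocks
    return percentages

# Hypothetical tally for one church wall (illustrative numbers only)
example_counts = {"variety A": 12, "variety B": 8, "variety C": 5}
example_total = 625  # all hewn rocks counted in the wall

result = larvikite_percentages(example_counts, example_total)
meets_minimum = example_total >= MIN_ROCKS
```

For this made-up church, 25 larvikites among 625 counted rocks give a cumulative proportion of 4 %, which is the kind of number that would be plotted at the church's position on the map.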

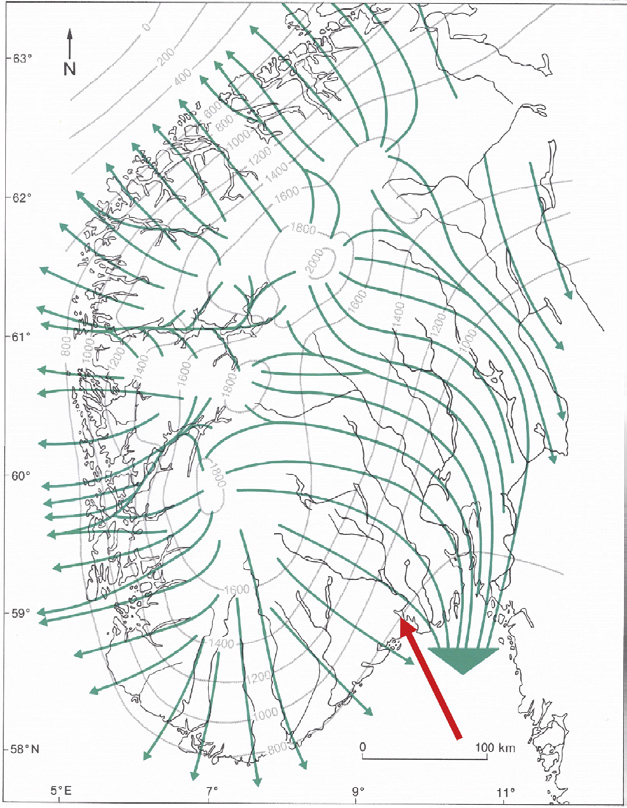

To close the geological part of the story, Figure 8 shows the most recent ice age glacial flow direction patterns in southern Norway. For the reader not familiar with the geography and Quaternary geology of Scandinavia, Denmark is situated some 200 km south of the Norwegian glacial flow field shown. Herewith the connection between identifiable, diagnostic erratics from the area surrounding Larvik in southern Norway and medieval church rock assemblages in Jutland, Denmark, should be fully established and understandable for all, no specialised geological competence needed.

The TOS point: the RE

The point of this extensive geological introduction is the key theme of this sampling column: novel applications of the RE.

Noe-Nygaard was acutely aware that there was an inherent “analytical error” involved in his visual identifications (TAE in today’s parlance of the TOS). Such was his awareness of his analytical performance that he devised his own RE. A translation (KHE) from the Danish in Noe-Nygaard (1991) is presented in Figure 6.

This is it! What a wonderful example of a conscientious scientist, aware that his professional classification performance (analytical performance) is associated with a significant non-zero uncertainty that must be considered. What is remarkable here is that, for geologists, the ability to identify rock types (and mineral species) is a matter of intense professional pride—this is what distinguishes a competent field geologist. One does not question a geologist’s rock identification competence!

And yet, in spite of his very impressive academic and other achievements, Arne Noe-Nygaard’s example of professional self-awareness is a remarkable, humble reminder to all scientists, technologists and samplers of today!

But it is never an easy matter to follow in the footsteps of a giant (Figure 9), not even for a geologist familiar with igneous rocks who has lived 10 years in Larvik! A first-foray comparison of performance uncertainty (RE%), performed during a summer 2019 vacation tour in Jutland taking in a number of beautiful medieval country churches, revealed just how good Arne Noe-Nygaard was at his métier. To be honest, and to the credit of Noe-Nygaard’s legacy, his “<10 %” RE uncertainty vastly outshone the score of the hopeful contemporary geologist in Figure 9 (if the reader must ask, the answer is “a considerably larger percentage”).
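For readers who want to see how an RE% figure of this kind can be computed, here is a minimal sketch. It assumes the RE result is expressed as a relative standard deviation of the replicated results (100 × standard deviation / mean), which is a common way of summarising an RE; the column does not spell out how Noe-Nygaard arrived at his “<10 %”, and the replicate values below are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence

def relative_variability_percent(replicates: Sequence[float]) -> float:
    """Relative standard deviation (100 * s / mean) of replicated results."""
    if len(replicates) < 2:
        raise ValueError("need at least two replicates")
    average = mean(replicates)
    if average == 0:
        raise ValueError("mean is zero; relative variability is undefined")
    return 100.0 * stdev(replicates) / average

# Hypothetical: larvikite % obtained from five repeated counts of one wall
hypothetical_replicates = [4.0, 4.2, 3.8, 4.1, 3.9]
re_percent = relative_variability_percent(hypothetical_replicates)
```

With these made-up replicates the relative variability comes out at roughly 4 %. Since sampling and identification happen in the same step on the church wall, a figure like this reflects the combined uncertainty of the repeated counting and rock identification.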

Conclusion

The RE is a very versatile facility for evaluating the total uncertainty [TSE + TAE] of any measurement system in which sampling plays a role. While the RE has a plethora of manifestations within traditional sectors in technology, industry, commerce, trading and society, this column treated an unusual application of RE thinking hidden away in a most unsuspected niche in academic geology. A famed Danish geologist devised his very own PAT-like sampling-analysis confluence spanning no less than 800 years. What’s not to like?

Figure 9. “So how difficult can it be?” if one believes one is familiar with syenites from southern Norway, that is. The author of this column could not resist this temptation when driving past an especially inviting hewn rock church during a summer holiday in 2019. Not surprisingly, it turned out to be quite a challenge to even try to best the master geologist Noe-Nygaard, RE < 10 %.

References

[1] A. Noe-Nygaard, Larvikiter i Kvaderstenskirker. Danmarks Geologiske Undersøgelse, Miljøministeriet, Editor Stig Schack Pedersen (1991). ISBN 87-88640-74-4 [Danish]

[2] A. Noe-Nygaard, Kirkekvader og Kløvet Kamp. Gyldendal (1985). ISBN 87-00-83014-3 [Danish]

[3] K. Bakeev, Process Analytical Technology, 2nd Edn. Wiley (2010). ISBN 978-0-470-72207-7

[4] K.H. Esbensen and B. Swarbrick, Multivariate Data Analysis: An Introduction to Multivariate Analysis, Process Analytical Technology and Quality by Design, 6th Edn. CAMO Software (2018) ISBN 978-82-691104-0-1

[5] A. Nesje, S.O. Dahl, E. Anda and N. Rye, “Blockfields in Southern Norway: significance for the late-Weichselian ice sheet”, Norsk Geologisk Tidsskrift 68(3), 149‒169 (1988).